You can create external tables for data in any format that COPY supports. Among them, Vertica is optimized for two columnar formats, ORC (Optimized Row Columnar) and Parquet. ORC and Parquet, like ROS in Vertica, are columnar formats. The files contain metadata that allows Vertica to read only the portions that are needed for a query and to skip entire files. External tables with ORC or Parquet data therefore generally provide better performance than ones using delimited or other formats, where the entire file must be scanned. If you have ORC or Parquet data, you can take advantage of optimizations including partition pruning and predicate pushdown. These formats are common among Hadoop users but are not restricted to Hadoop; you can place Parquet files on S3, for example. If you export data from Vertica, consider exporting to one of these formats so that you can take advantage of their performance benefits when using external tables.

Files compressed by Hive or Impala require Zlib (GZIP) or Snappy compression. Vertica does not support LZO compression for these formats. Vertica supports all simple data types supported in Hive version 0.11 or later. ORC or Parquet files must not use complex data types.

Spark supports many formats, such as csv, json, xml, parquet, orc, and avro. Spark can be extended to support many more formats with external data sources; for more information, see Apache Spark packages. The best format for performance is Parquet with Snappy compression, which is the default in Spark 2.x. This is the reason Parquet is the default file format. To begin with, Apache Spark is optimized for the Parquet file format and Hive is optimized for the ORC file format. Use the Spark DataFrameReader's orc() method to read an ORC file into a DataFrame.

In one of my test cases, I see that 43.0MB are used. I expected a much higher compression ratio.
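To make the metadata-based skipping described above more concrete, here is a minimal sketch in plain Python. It is not how Vertica or any ORC/Parquet reader is actually implemented; `RowGroup`, `make_row_group`, and `scan_greater_than` are hypothetical names used only for illustration. Each chunk of a column carries min/max statistics, and a filter of the form `value > threshold` skips any chunk whose maximum cannot satisfy it.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class RowGroup:
    """A chunk of one integer column plus the statistics stored alongside it."""
    values: List[int]
    min_value: int
    max_value: int


def make_row_group(values: List[int]) -> RowGroup:
    # Statistics are computed once, when the chunk is written.
    return RowGroup(values=values, min_value=min(values), max_value=max(values))


def scan_greater_than(groups: List[RowGroup], threshold: int) -> Tuple[List[int], int]:
    """Return the matching values and the number of row groups actually read."""
    matches: List[int] = []
    groups_read = 0
    for group in groups:
        # Pushdown check: if even the largest value fails the filter,
        # skip the whole group without looking at its values.
        if group.max_value <= threshold:
            continue
        groups_read += 1
        matches.extend(v for v in group.values if v > threshold)
    return matches, groups_read


groups = [
    make_row_group([1, 5, 9]),
    make_row_group([10, 14, 19]),
    make_row_group([20, 25, 30]),
]
matches, groups_read = scan_greater_than(groups, 18)
print(matches, groups_read)  # prints: [19, 20, 25, 30] 2
```

Only two of the three chunks are read here. In a delimited file there are no such statistics, so every row has to be parsed before the filter can be applied.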
Spark natively supports the ORC data source: you can read ORC into a DataFrame and write it back to the ORC file format using the orc() method of DataFrameReader and DataFrameWriter. orc(path, mode, partitionBy, compression) saves the content of the DataFrame in ORC format at the specified path. Schema merging (default: false): when true, the ORC data source merges schemas collected from all data files; otherwise the schema is picked from a random data file. A further setting enables vectorized ORC decoding in the native implementation.

In one case, I create records so that all the values of i and j are unique. In this case, I see that 89.4MB are used. In a second case, I create records so that most of the values of i and j are 0.

I noticed that while storing the ORC file I did not provide a compression option, so I used option("compression", "snappy") while saving the file, and it appears the.
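The intuition behind the two test cases above (unique values versus mostly zeros) can be checked outside Spark with a small sketch. This uses Python's `zlib` as a stand-in codec, which is an assumption for illustration only; it does not reproduce Spark's in-memory columnar compression or the ORC writer, and the record count and the 1% share of nonzero values are arbitrary choices.

```python
import random
import struct
import zlib

NUM_RECORDS = 100_000  # arbitrary; smaller than 1M so the sketch runs quickly


def pack_column(values):
    """Serialize a list of 32-bit ints into one contiguous byte string."""
    return struct.pack(f"<{len(values)}i", *values)


def compressed_size(values):
    """Size in bytes of the zlib-compressed column."""
    return len(zlib.compress(pack_column(values)))


rng = random.Random(0)  # fixed seed so repeated runs build the same data

# Case 1: every value is unique, shuffled so there is no simple pattern.
unique_column = list(range(NUM_RECORDS))
rng.shuffle(unique_column)

# Case 2: most values are 0; roughly 1% are nonzero.
sparse_column = [
    rng.randrange(1, NUM_RECORDS) if rng.random() < 0.01 else 0
    for _ in range(NUM_RECORDS)
]

raw_size = len(pack_column(unique_column))
unique_size = compressed_size(unique_column)
sparse_size = compressed_size(sparse_column)

print(f"raw bytes per column:     {raw_size}")
print(f"unique, compressed:       {unique_size}")
print(f"mostly zero, compressed:  {sparse_size}")
```

With a general-purpose codec the mostly-zero column shrinks to a small fraction of the unique one, which is why a reduction of only about half between the two cases looks like compression is not being applied.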

options(options) adds output options for the underlying data source. In Hive, a table stored as ORC is declared along these lines: create table employeeorc (empid string, name string) row format delimited fields terminated by '\t' stored as orc tblproperties ('orc. Since Spark 3.2, you can take advantage of Zstandard compression in ORC files on both Hadoop versions.

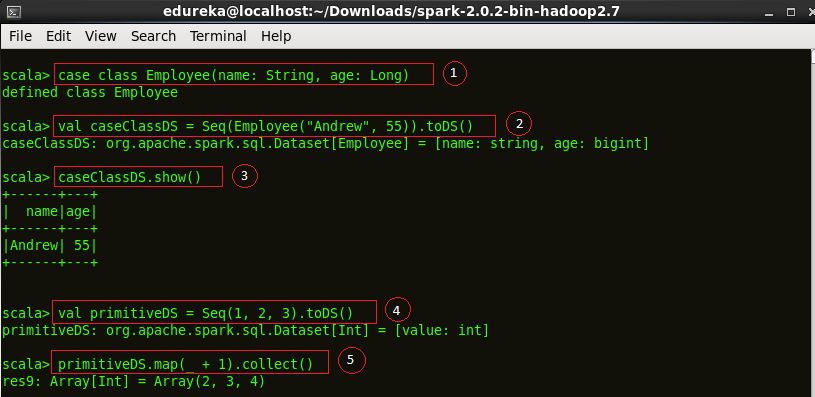

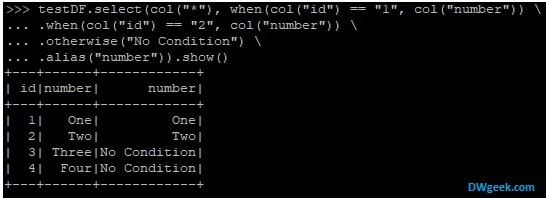

option(key, value) adds an output option for the underlying data source. The mode setting specifies the behavior when data or table already exists. Zstandard: Spark supports both Hadoop 2 and 3.

The CREATE TABLE statement is used to define a table in an existing database. The CREATE statements include CREATE TABLE USING DATASOURCE.

I'd like to use Spark on a sparse dataset. I understand that SparkSQL now supports columnar data stores (I believe via SchemaRDD). Is there a way I can make sure that Spark stores my dataset as a compressed, in-memory, columnar store? I've been told that compression of the columnar store is implemented but currently turned off by default. At the Spark Summit, someone told me that I have to turn on compression as follows: `conf.set("", "true")`. However, doing so doesn't seem to make any difference in my memory footprint. The following are snippets of my test code: `case class Record(i: Int, j: Int)`, `val conf = new SparkConf().setAppName("Simple Application")`, `conf.set("", "true")`, `val records = // create an RDD of 1M Records`.
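As a rough illustration of why a columnar layout suits a sparse dataset like the one in the question above, the sketch below stores the `i` and `j` fields as separate columns and run-length encodes each one. Run-length encoding is picked here only as a simple, common columnar scheme; this is not a description of what Spark does internally, and `run_length_encode` and `run_length_decode` are hypothetical helper names.

```python
from itertools import groupby
from typing import List, Tuple


def run_length_encode(column: List[int]) -> List[Tuple[int, int]]:
    """Collapse consecutive equal values into (value, run_length) pairs."""
    return [(value, sum(1 for _ in run)) for value, run in groupby(column)]


def run_length_decode(runs: List[Tuple[int, int]]) -> List[int]:
    """Expand (value, run_length) pairs back into the original column."""
    column: List[int] = []
    for value, count in runs:
        column.extend([value] * count)
    return column


# Row-oriented records (i, j), mostly zeros as in the sparse test case.
records = [(0, 0), (0, 0), (0, 7), (0, 0), (0, 0), (3, 0)]

# Column-oriented view: all i values together, all j values together.
column_i = [i for i, _ in records]
column_j = [j for _, j in records]

runs_i = run_length_encode(column_i)
runs_j = run_length_encode(column_j)

print(runs_i)  # prints: [(0, 5), (3, 1)]
print(runs_j)  # prints: [(0, 2), (7, 1), (0, 3)]
```

Grouping values by column puts the long runs of zeros next to each other, so a few pairs can stand in for many rows. Stored row by row, the zeros of `i` and `j` are interleaved with the occasional nonzero value and the runs are shorter.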
